AI of the future: ‘Generative adversarial networks’ (GANs)

Advancements in artificial intelligence (AI) over the past decade have been tremendous. Machines can now tell people apart (even twins!), recognize your voice with high accuracy, and model the data you provide to analyze it and predict future trends. But all of this is modest compared to what next-generation AI can do.

Machines can now generate text, images, and even music that an average person cannot tell apart from the real thing. This is possible thanks to a breakthrough idea called generative adversarial networks (GANs), introduced by Ian Goodfellow and his colleagues in 2014. To understand GANs and their applications better, let’s start with a very brief introduction to the basic building blocks of a GAN.

Decoding GANs:

GAN stands for generative adversarial network. As the word “adversarial” suggests, the network contains two adversaries that constantly try to outdo each other. To understand this intuitively, imagine that you want to get better at playing chess. Typically, you would learn the basics and then play against someone who is better than you, learn from your mistakes and your opponent’s moves, and improve with every game. That is exactly what GANs do. There are two networks in a GAN:

- A generative network that takes a random noise input and generates images/text or any data, depending on our application.

- A discriminative network that classifies each sample it sees as real (drawn from the dataset) or fake (produced by the generator).

These two networks are at war with each other. The generative network learns to create more believable data to fool the discriminator, while the discriminator learns to better tell fake samples from true ones. The discriminator is also fed actual samples from a dataset so that it can learn what true samples look like. For example, we can train the discriminator on a dataset of face images if we want to generate artificial face images. With the basic working of a GAN covered, let’s see one in action.
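The tug-of-war described above can be sketched in a few lines of plain Python. This is a toy under loud assumptions, not the DCGAN used in the demos: the data are single numbers drawn around 4.0, the generator is the linear map `a*z + b`, the discriminator is a one-feature logistic classifier, and every name here (`ToyGAN`, `train`, the learning rate) is made up for illustration. The gradients are worked out by hand from the standard GAN losses.

```python
import math
import random


def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


class ToyGAN:
    """1-D GAN: generator G(z) = a*z + b, discriminator D(x) = sigmoid(w*x + c)."""

    def __init__(self, lr=0.05):
        self.a, self.b = 1.0, 0.0   # generator parameters
        self.w, self.c = 0.1, 0.0   # discriminator parameters
        self.lr = lr                # assumed learning rate

    def generate(self, z):
        return self.a * z + self.b

    def discriminate(self, x):
        """Estimated probability that x is a real sample."""
        return sigmoid(self.w * x + self.c)

    def discriminator_step(self, real, noise):
        """One gradient step on -[log D(x) + log(1 - D(G(z)))]."""
        gw = gc = 0.0
        for x, z in zip(real, noise):
            d_real = self.discriminate(x)
            fake = self.generate(z)
            d_fake = self.discriminate(fake)
            gw += (d_real - 1.0) * x + d_fake * fake
            gc += (d_real - 1.0) + d_fake
        n = len(real)
        self.w -= self.lr * gw / n
        self.c -= self.lr * gc / n

    def generator_step(self, noise):
        """One gradient step on -log D(G(z)), the non-saturating generator loss."""
        ga = gb = 0.0
        for z in noise:
            d_fake = self.discriminate(self.generate(z))
            grad_x = (d_fake - 1.0) * self.w   # d(-log D) / d(fake sample)
            ga += grad_x * z
            gb += grad_x
        n = len(noise)
        self.a -= self.lr * ga / n
        self.b -= self.lr * gb / n


def train(steps=200, batch=32, seed=0):
    """Alternate discriminator and generator updates on toy data."""
    rng = random.Random(seed)
    gan = ToyGAN()
    for _ in range(steps):
        real = [rng.gauss(4.0, 0.5) for _ in range(batch)]   # "true" samples
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        gan.discriminator_step(real, noise)
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        gan.generator_step(noise)
    return gan
```

Calling `train()` runs the two updates in alternation, which is the chess match from the analogy: each player improves against the other's latest moves. Models this simple are not guaranteed to settle, and the parameters may keep circling each other rather than converging.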

GANs in action:

One of the most common datasets used to test machine learning algorithms is MNIST, a set of handwritten digits from 0 to 9.

These are 28×28 images with the background in black and the digit itself in white. So now, let’s train a GAN on this dataset and see if it can generate realistic digits.

Keras-dcgan is used for this demo. Within a few hundred epochs (an epoch is one full pass over the training dataset), we can see pretty good images. A sample set of generated images is shown below.
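To make the cost of an epoch concrete: one epoch means the network has seen every training image once, and the standard MNIST training split holds 60,000 images. The small sketch below counts the weight updates in one epoch and shows a pixel rescaling that is common for GAN inputs. The batch size of 128 and the helper names are assumptions for illustration, not settings taken from the demo.

```python
import math

MNIST_TRAIN_IMAGES = 60_000   # size of the standard MNIST training split
IMAGE_SIDE = 28               # images are 28x28 pixels


def updates_per_epoch(num_samples, batch_size):
    """Number of mini-batch updates needed to see every sample once."""
    return math.ceil(num_samples / batch_size)


def scale_pixel(value):
    """Map an 8-bit pixel (0..255) to the range [-1, 1]."""
    return value / 127.5 - 1.0


batch_size = 128   # assumed; not taken from the original demo
print(updates_per_epoch(MNIST_TRAIN_IMAGES, batch_size))   # 469
print(IMAGE_SIDE * IMAGE_SIDE)                             # 784 values per image
print(scale_pixel(0), scale_pixel(255))                    # -1.0 1.0
```

Under these assumptions, "a few hundred epochs" means well over a hundred thousand updates for each of the two networks.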

A set of generated images is put together in a single image for analysis. We can see that the network really has learned what most digits look like. The gif below clearly shows how the system gets better after every few training iterations. The digits the network generates are not copied from the dataset and spit back out. They are images generated from scratch. Not impressed yet? It just generates some random digits, right? Then look at this next application, where a GAN generates artificial images of objects.

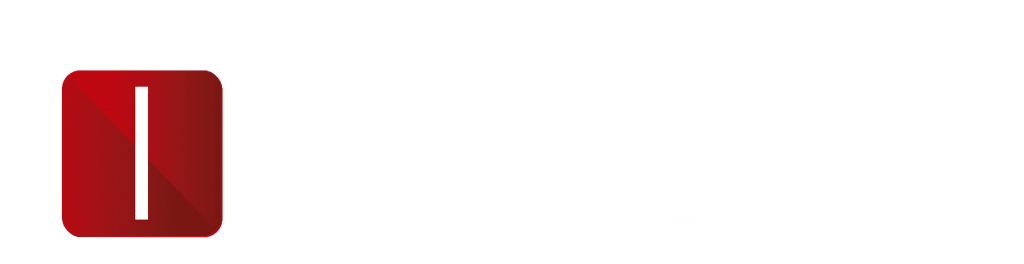

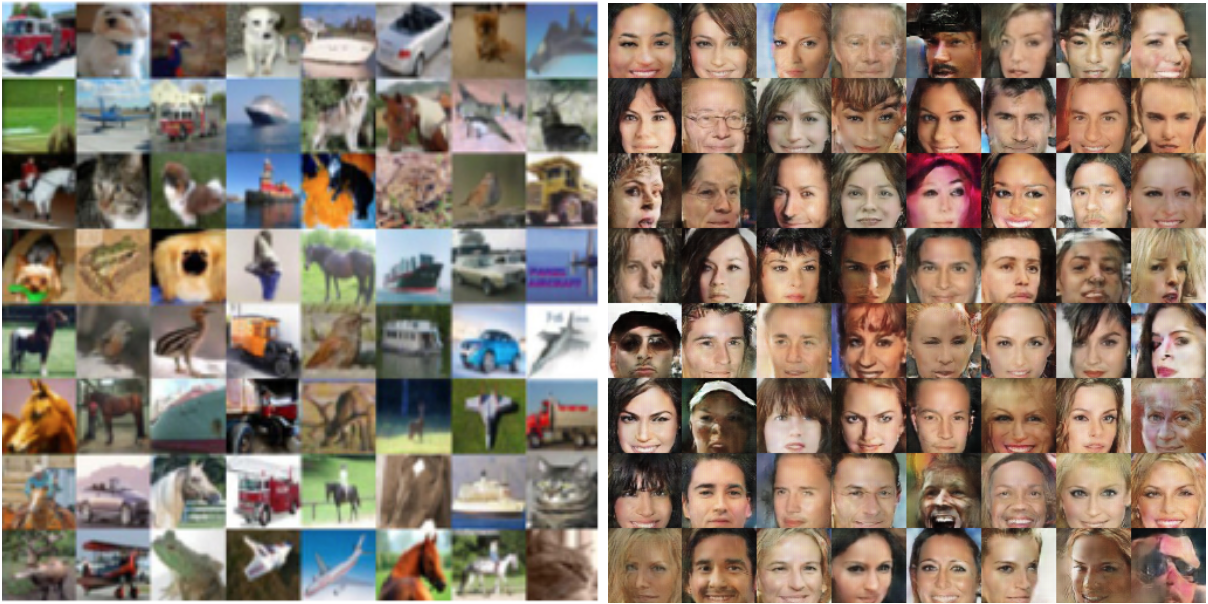

A GAN is trained here on the CIFAR-10 dataset, a collection of images in 10 classes: airplanes, automobiles, birds, cats, deer, dogs, frogs, horses, ships, and trucks. This is how a DCGAN performs on CIFAR-10, where it learns to generate fake images of birds, animals, and vehicles.

Source: code, DCGAN_code

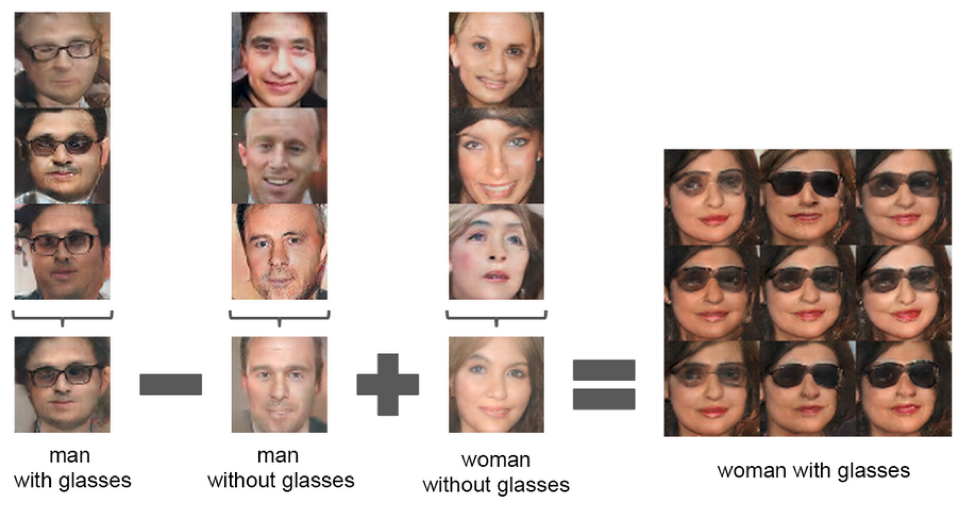

The network simply generates random images of what it has learned to fake. It can also generate faces of people who technically do not exist on the planet (or do they?). Most faces come out skewed and distorted, but some actually look realistic and appealing. Another fun application: we can even do arithmetic on faces, adding and subtracting the noise vectors behind them.

Arithmetic on faces: DCGAN_code
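The arithmetic happens on the noise vectors that produce the faces, not on the pixels. A well-known example from the DCGAN work is "smiling woman minus neutral woman plus neutral man", which yields a vector that the generator turns into a smiling man. The sketch below shows only the vector math, on made-up 4-dimensional vectors. Real models typically use around 100 dimensions, and the trained generator that would render the result is not included here.

```python
def mean_vector(vectors):
    """Element-wise mean; averaging several examples of a concept reduces noise."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def add(u, v):
    return [a + b for a, b in zip(u, v)]


def subtract(u, v):
    return [a - b for a, b in zip(u, v)]


# Hypothetical noise vectors, each standing for one visual concept.
smiling_woman = [1.0, 2.0, 0.0, 1.0]
neutral_woman = [1.0, 0.0, 0.0, 1.0]
neutral_man = [0.0, 0.0, 3.0, 1.0]

smile_direction = subtract(smiling_woman, neutral_woman)
smiling_man = add(smile_direction, neutral_man)
print(smiling_man)   # [0.0, 2.0, 3.0, 1.0]
```

In practice each concept vector would itself be a `mean_vector` over the noise vectors of several generated faces that show that concept.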

Still not impressed? AI generates realistic images of fake people and animals. Big deal. Pfffft.

All the images I’ve shown here so far are fake ones a GAN generated. But the only control we have over the generated images is through the input noise vector fed to the generator. For different noise vectors, it generates different faces or objects. We really don’t have control here on generating the exact face or the exact object we want. However, a GAN can deal with that too.

What if I told you that a GAN can generate an image of something that may not even exist in nature, just because you asked for it specifically? For example, you can ask for an image of a “small yellow bird with a brown crown and yellow tail,” and the network will produce one for you.

These are the images that the StackGAN network generated for the above text. Again, the generator did not pick matching images from the dataset and hand them back. These are images that the generator drew to fit our text. Astonishing and scary at the same time, right?
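One common way to give a generator this kind of control is to feed it a condition vector alongside the noise, for example by concatenating the two. The sketch below illustrates only that input step. The bag-of-words `encode_text` function and its six-word vocabulary are hypothetical stand-ins for a learned text encoder, and this is not StackGAN's actual pipeline.

```python
import random

VOCABULARY = ["small", "yellow", "bird", "brown", "crown", "tail"]   # hypothetical


def encode_text(description, vocabulary=VOCABULARY):
    """Toy stand-in for a text encoder: 1.0 if the word appears, else 0.0."""
    words = set(description.lower().replace(",", " ").split())
    return [1.0 if word in words else 0.0 for word in vocabulary]


def generator_input(description, noise_dim=8, seed=None):
    """Concatenate a random noise vector with the encoded condition."""
    rng = random.Random(seed)
    noise = [rng.gauss(0.0, 1.0) for _ in range(noise_dim)]
    return noise + encode_text(description)


text = "small yellow bird with a brown crown and yellow tail"
first = generator_input(text, seed=1)
second = generator_input(text, seed=2)
# The noise part differs between the two inputs; the condition part is identical.
print(first[8:] == second[8:])   # True
```

The shared condition asks for the same kind of bird each time, while the differing noise is what lets the generator draw a different bird for every request.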

Other applications of GANs:

The applications above mostly involve images because it’s easier to appreciate the effectiveness of GANs visually. Below are other influential applications of GANs:

1. Drug discovery and cancer research:

A user can train the generator on available drugs for existing illnesses to generate new possible treatments for incurable diseases. Researchers proposed an adversarial autoencoder (AAE) model for the identification and generation of new compounds based on available biochemical data.

The result: the trained AAE model predicted compounds that are already proven anticancer agents, as well as new, untested compounds whose anticancer activity should be validated experimentally.

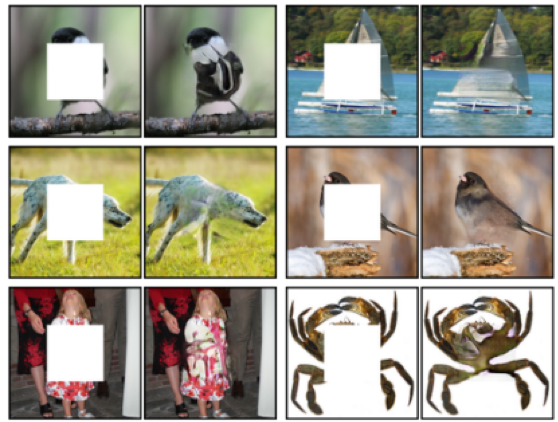

2. Image inpainting/completion:

Imagine that someone photobombed your favorite picture and you couldn’t do anything about it. You can now remove them from the picture using GANs for image inpainting. Example:

Illustration from this paper.

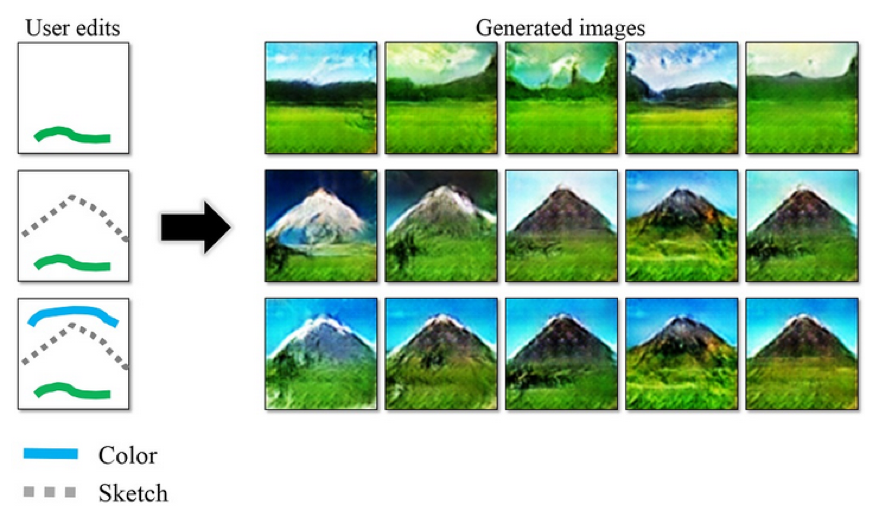

3. Interactive visual manipulation:

As shown below, GANs can create drawings/paintings with simple strokes.

Source: project

Semantic manipulation is amazing and frightening at the same time, as shown in this video:

4. Anomalous tissue detection:

GANs can also be used in tissue analysis to find anomalies, as in this paper. Its results on optical coherence tomography images of the retina show that the approach correctly identifies anomalous images, such as those containing retinal fluid or hyperreflective foci.

5. Music and video generation:

GANs can also generate artificial music and videos. Below is an example of a gif a GAN has generated from a still image.

Source: project

Drawbacks and ongoing research:

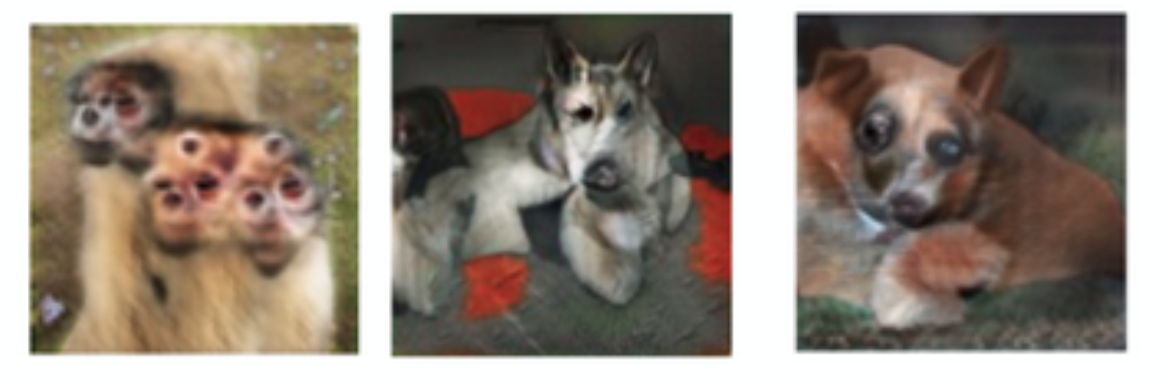

While the previous GAN-generated images were nearly perfect, the same network also generated the flawed images shown here. GANs struggle with 3D objects, miss perspective, and sometimes fail to work out how many times a particular feature should appear in an object.

Source: GAN

GANs are highly unstable to train, and they need careful design to prevent one network from overpowering the other. Stabilizing GANs is an area of ongoing research. Facebook’s Wasserstein GAN (WGAN) shows promising improvements in stability by using a different loss function. In simple terms, we are not yet fully asking “what GANs can do for us,” because we are still working out “what we can do for GANs” to make them stable.
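To make "a different loss function" concrete, here are the two objectives side by side in their standard textbook form. The symbols are introduced here for illustration: $G$ is the generator, $D$ the discriminator, $p_{\text{data}}$ the distribution of real samples, and $p_z$ the noise distribution. The original GAN plays this minimax game:

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

Wasserstein GAN replaces the discriminator with a "critic" $f$ that outputs an unbounded score instead of a probability, and drops the logarithms:

$$
\min_G \max_{f \,:\, \|f\|_L \le 1} \; \mathbb{E}_{x \sim p_{\text{data}}}\big[f(x)\big] - \mathbb{E}_{z \sim p_z}\big[f(G(z))\big]
$$

The constraint $\|f\|_L \le 1$ means the critic must be 1-Lipschitz, which the original WGAN approximates by clipping the critic's weights. The intuition is that the critic's score keeps giving the generator a useful gradient even when real and fake samples are easy to tell apart, a situation in which the logarithmic loss tends to flatten out.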

But the future of GANs looks bright, and in no time, we could see machine-generated code, music, videos, and even essays and blogs. However, I can assure you this blog post wasn’t written by a GAN (or was it?).

This post may or may not have been written by Infolob’s resident AI guru, Sailesh Krishnamurthy. But you can definitely reach him at [email protected]. Sailesh’s next article can be read here.